
HTML Validator: An HTML validator is a specialized program or application used to check the validity of HTML markup in a web page for any syntax and lexical errors. A validator is needed because HTML is not compiled and is very forgiving of errors: a page with mistakes usually still works in some way, so there is otherwise no quick way to check for such errors.

Interested in web development? Try our other tools, like the Site Navigability Analyzer, which can let you see what a spider sees. It can analyze your anchor text diversity and find the length of the shortest path to any page. The Thin Content Checker can analyze your site's content, let you know the percentage of unique phrases per page, and generate a histogram of page content lengths. A common need among web developers is to know which of their pages are being indexed, and which are not. Thus we created the Sitemap Index Analyzer.

For the Website Validator, crawling is handled by DatayzeBot, the Datayze spider. Our spider crawls at a leisurely rate of 1 page every 1.5 seconds. While the spider doesn't keep track of the contents of the pages it crawls, it does keep track of the number of requests issued by each visitor. Currently the crawler is limited to 1000 pages per user per day. Since DatayzeBot does not index or cache any pages it crawls, rerunning the Website Validator will count against your daily allowed number of page crawls. You can get around the cap by pausing the crawler and resuming it another day.

DatayzeBot now respects the robots exclusion standard. To specifically allow (or disallow) the crawler to access a page or directory, create a new set of rules for "DatayzeBot" in your robots.txt file. DatayzeBot will follow the longest matching rule for a specified page, rather than the first matching rule. If no matching rule is found, DatayzeBot assumes it is allowed to crawl the page. Not sure if a page is excluded by your robots.txt file? The Index/No Index app will parse HTML headers, meta tags and robots.txt and summarize the results for you.
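To make the longest-matching-rule behavior described above more concrete, here is a minimal Python sketch. It is not DatayzeBot's actual code: the `Rule` class, the `is_allowed` function, and the example paths are hypothetical, rules are treated as plain path prefixes (no wildcards), and preferring Allow when two rules tie in length is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    allow: bool  # True for an "Allow:" line, False for a "Disallow:" line
    path: str    # path prefix the rule applies to


def is_allowed(rules: list[Rule], url_path: str) -> bool:
    """Apply the longest matching rule; allow when nothing matches."""
    # An empty path (e.g. a bare "Disallow:") matches nothing in this sketch.
    matches = [r for r in rules if r.path and url_path.startswith(r.path)]
    if not matches:
        return True  # no matching rule: crawling is assumed to be allowed
    # The longest prefix wins, regardless of the order of rules in the file.
    # On a length tie this sketch prefers Allow (an assumption).
    best = max(matches, key=lambda r: (len(r.path), r.allow))
    return best.allow


# Hypothetical rules from a "User-agent: DatayzeBot" group in robots.txt.
rules = [
    Rule(allow=False, path="/private/"),
    Rule(allow=True, path="/private/press/"),
]

print(is_allowed(rules, "/private/press/kit.html"))  # True: longer Allow rule wins
print(is_allowed(rules, "/private/notes.html"))      # False: only Disallow matches
print(is_allowed(rules, "/blog/"))                   # True: no rule matches
```

With a first-match policy the first URL would have been blocked by the `/private/` rule; under longest-match the more specific `/private/press/` rule takes precedence.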
This tool uses the web services of the W3C Markup Validation Service and the HTML5 Nu HTML Checker. We use multiple validators to reduce the load on any one validator. It's possible these two validators are running two different Nu HTML Checker instances and thus may report different errors, even when validating the same page.

In order for this tool to work, we must crawl the site or page you want analyzed. Our spider has a 1000-page daily cap; the third-party services may have their own caps. We request that you be kind to these services. When making changes to a URL, use the "See Validation" link on the Pages With Errors tab to check just that page rather than rerunning the Website Validator over your entire site. When making changes to a template shared between pages, we recommend verifying that the template is error free by validating a single page that uses it before rerunning the Website Validator.

Keep in mind that not all errors in HTML markup are equally problematic, and many errors have little to no impact on how the page is displayed to the user. Click over to the Pages With Errors tab to start tackling the problem spots.
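As a rough illustration of separating errors from less serious messages, the sketch below tallies one page's results from a checker response. It assumes the response is JSON with a `messages` array whose entries carry a `type` field and, for warnings, a `subType` field, which is how the Nu HTML Checker's JSON output is commonly described. The `tally_messages` function and the sample response are made up for illustration and are not taken from this tool.

```python
import json
from collections import Counter


def tally_messages(response_text: str) -> Counter:
    """Count errors, warnings, and other messages in one JSON response."""
    counts = Counter()
    for msg in json.loads(response_text).get("messages", []):
        if msg.get("type") == "error":
            counts["error"] += 1
        elif msg.get("type") == "info" and msg.get("subType") == "warning":
            counts["warning"] += 1
        else:
            counts["other"] += 1  # plain info notes and anything unrecognized
    return counts


# Made-up response for illustration; not output from a real validation run.
sample = json.dumps({
    "messages": [
        {"type": "error", "lastLine": 12, "message": "Stray end tag."},
        {"type": "error", "lastLine": 30, "message": "Duplicate ID."},
        {"type": "info", "subType": "warning", "lastLine": 4,
         "message": "Consider adding a lang attribute."},
    ]
})

counts = tally_messages(sample)
print(f"errors: {counts['error']}, warnings: {counts['warning']}")
```

Keeping errors and warnings in separate buckets makes it easier to decide which pages to fix first, since warnings often have no visible effect on the page.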

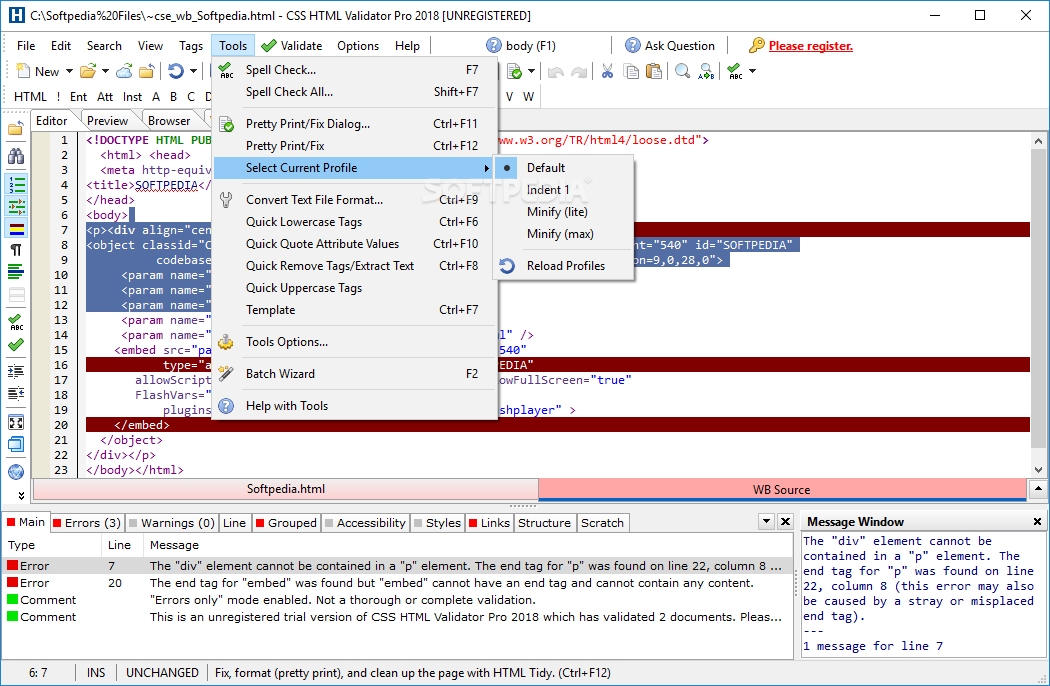

It uses the service from, but it is not a simple wrapper; it is a local version. So it can also test local files (which the online checker does not allow), and it is much faster than the web validator, which is sometimes very unresponsive and slow. The best thing: it can scan a complete local site with a bunch of files as a batch process in no time, and we use it as a quality check before we go online with something. That saves a lot of time and makes it harder to overlook something. It has its limits, though: it does not test CSS. And I guess the engine does not understand HTML5 code at all. The developer also does not seem to be active on this project: there has been no update in a long time, and the developer does not respond to emails at all (I tried twice). I hope this software will be maintained further; I have not found other software yet that comes close to this set of time-saving features, and I would love to keep it in my set of testing tools as long as possible.

What are the most common errors on your website? The Website Validator crawls a website, runs the contents through a W3 HTML validator, and summarizes the results for you. It will break down errors and warnings by type, as well as give you the overall percentage of error-free pages. In the Error Summary tab you'll find a detailed description of all the errors encountered on your site.
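The kind of site-wide summary the Website Validator reports (errors by type plus the percentage of error-free pages) can be sketched in a few lines of Python. This is only an illustration: the `summarize` function, the page URLs, and the error names are hypothetical and do not reflect the tool's internals.

```python
from collections import Counter


def summarize(pages: dict[str, list[str]]) -> tuple[float, Counter]:
    """Return (percent of error-free pages, error counts by type)."""
    by_type = Counter(err for errors in pages.values() for err in errors)
    clean = sum(1 for errors in pages.values() if not errors)
    percent_clean = 100.0 * clean / len(pages) if pages else 0.0
    return percent_clean, by_type


# Hypothetical crawl results: URL -> error types found on that page.
pages = {
    "/": [],
    "/about": ["Stray end tag", "Duplicate ID"],
    "/blog": ["Stray end tag"],
    "/contact": [],
}

percent_clean, by_type = summarize(pages)
print(f"{percent_clean:.0f}% of pages are error free")
for error_type, count in by_type.most_common():
    print(f"{count:>3}  {error_type}")
```

Sorting by frequency with `most_common()` puts the errors that affect the most pages at the top, which is usually where a shared template is the culprit.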
